Pitch

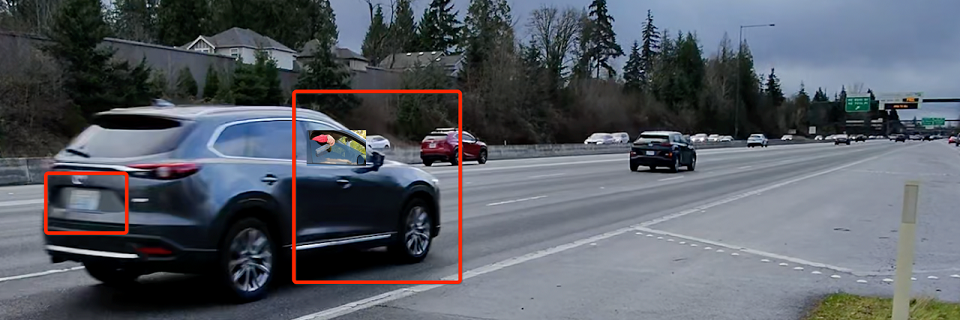

Did you know that Washington State alone misses an estimated $929 million in fines per year, simply because it is unable to enforce the law that fines drivers who litter? And did you know that litter is a hidden goldmine of information? For example, human waste is a strong indicator of public health risk, and an increase in bedding and clothing in litter is highly correlated with an increase in the homeless population.

Having collected roadside litter for almost two years, we are determined to improve city infrastructure and the urban environment by transforming problems into insights, and insights into solutions. Our approach would also help address the homelessness crisis, which has cost California's government a staggering $25 billion over the past five years and Washington's government $5.3 billion over the past 11 years, by offering roadside litter picking as a new type of job: the litter data curator.

Over the past two years, we've collected over 120GB of litter data across five cities in the greater Seattle area, developed a 35-class litter classification dataset, built a hardware prototype with a mounted AI camera, and trained machine learning prototypes to detect and classify litter. Along the way, we brought together seven seemingly unrelated groups of people (roadside workers and volunteers, university professors, software/hardware engineers, policy makers, lawyers, nonprofits, and even high schoolers) and started equipping old-fashioned work (litter cleanup) with modern AI technologies. We are on track to completely reinvent the way roadside litter surveys are conducted, all while bringing communities closer and creating opportunities for new types of AI-empowered workers.

Our business model includes multiple revenue streams:

- Revenue Sharing with Government Agencies: Using our data to increase revenues through targeted enforcement, such as litter fines.
- Subscription Model for Data and Analysis: Providing governments and organizations with actionable insights on public health, economic trends, and socio-environmental dynamics.
- Software Licensing and Hardware Sales: Supplying infrastructure providers with our AI-powered devices to collect and analyze public infrastructure data, such as litter and traffic patterns.

We believe AI should work alongside people, create new jobs, and make the world a better place by tackling practical problems such as degrading social infrastructure, a diminishing sense of purpose, and crumbling environments in both urban and wilderness lands.

Intro

The story began in 2019, when I became deeply concerned about the visible degradation of our environment and the lack of resources allocated to address it. For instance, highway ramps became increasingly cluttered with litter during the pandemic, yet no action was taken. Frustrated, I joined a local Adopt-a-Highway volunteer group and began cleaning up litter near my home.

While volunteering, I noticed an untapped potential in the litter itself: it held valuable data. In May 2023, I started taking photos of the litter I collected, and patterns began to emerge. For example, well-maintained areas often had more tissue paper in their litter, while less-managed areas contained abandoned clothing. It was like studying human behavior through the traces we leave behind.

By mid-2024, my friend Autumn Yuan had joined as a co-founder, and together we began leveraging these insights to tackle litter control more effectively. Our goal was not just cleaner spaces but smarter, data-driven solutions that could enhance infrastructure planning, improve environmental outcomes, and even create new types of jobs in the AI era. Through this work, we aim to advance our understanding of the physical world and transform how we care for our shared environment.

Work

Since May 2023, we have collected over 120GB of litter survey data across five cities in the greater Seattle area. This effort has engaged a diverse team, including data collectors, curators, machine learning engineers, and hardware prototype designers. Our outputs include litter distribution and composition analyses, as well as a 35-class litter classification dataset that is regularly updated.

This data allows us to identify areas where litter is increasing, highlighting regions in urgent need of litter fine enforcement and intervention. Additionally, by correlating our dataset with public health and demographic data, we can uncover previously untapped insights to inform smarter environmental and community strategies.
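The page doesn't specify the statistics behind these analyses. Here is a minimal sketch, assuming repeated surveys of the same areas. A least-squares slope over successive counts can flag where litter is increasing. A Pearson coefficient can measure how closely a litter category's share tracks an external indicator across areas. All names are hypothetical, and a correlation alone does not establish cause.

```python
from math import sqrt


def _centered_sums(xs, ys):
    """Return (Sxx, Syy, Sxy): sums of squared and cross deviations from the means."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxx, syy, sxy


def trend_slope(counts):
    """Least-squares slope of litter counts over successive surveys (items per survey)."""
    sxx, _, sxy = _centered_sums(list(range(len(counts))), counts)
    return sxy / sxx


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length series, e.g. each area's share of
    clothing/bedding litter vs. a demographic indicator for the same areas."""
    sxx, syy, sxy = _centered_sums(xs, ys)
    if sxx == 0 or syy == 0:
        raise ValueError("both series must vary")
    return sxy / sqrt(sxx * syy)


def increasing_areas(counts_by_area, min_slope=0.0):
    """Hypothetical helper: areas whose counts trend upward across at least two surveys."""
    return [area for area, counts in counts_by_area.items()
            if len(counts) >= 2 and trend_slope(counts) > min_slope]
```

For example, `increasing_areas({"area_a": [120, 135, 160], "area_b": [90, 85, 70]})` flags only `area_a`. The area names and counts are made up.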

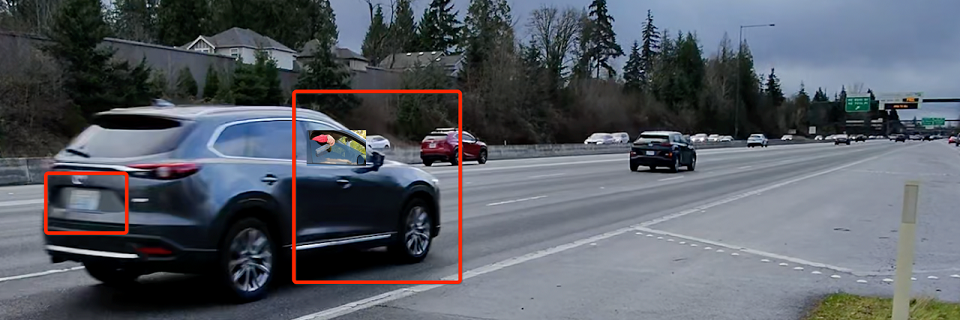

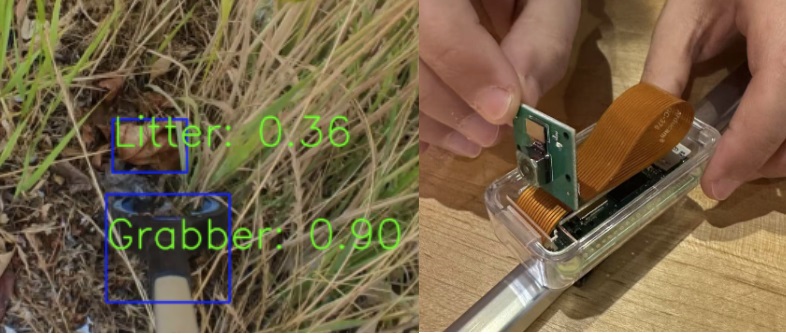

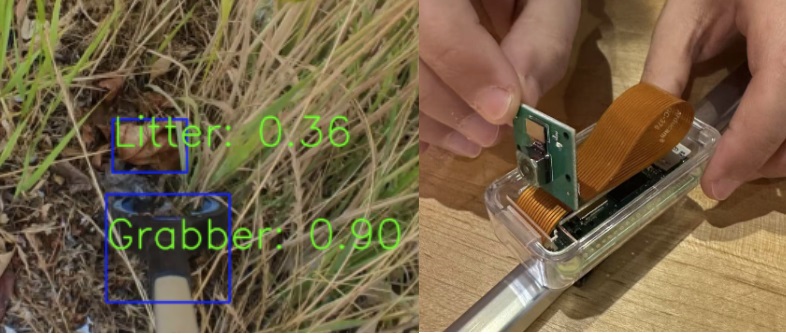

Here is a sample image taken from our clip-on AI device, which can be attached to any grabber on the market.

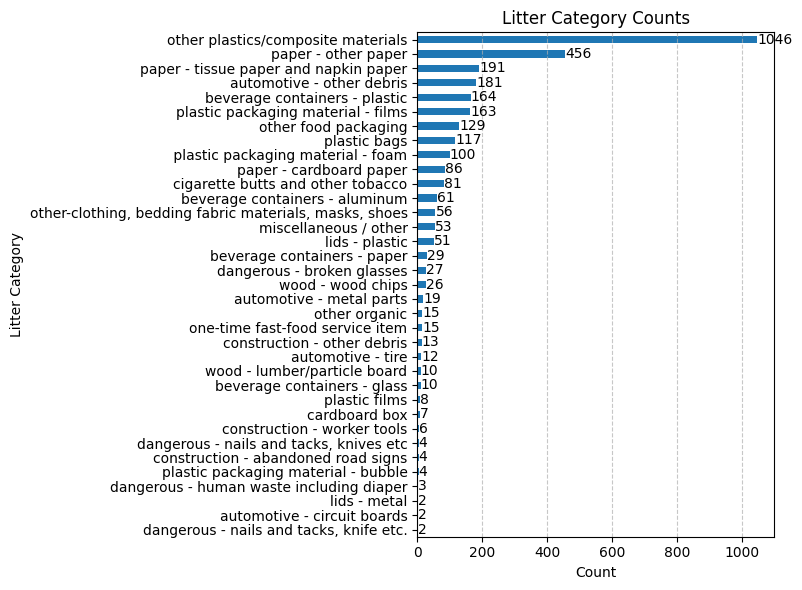

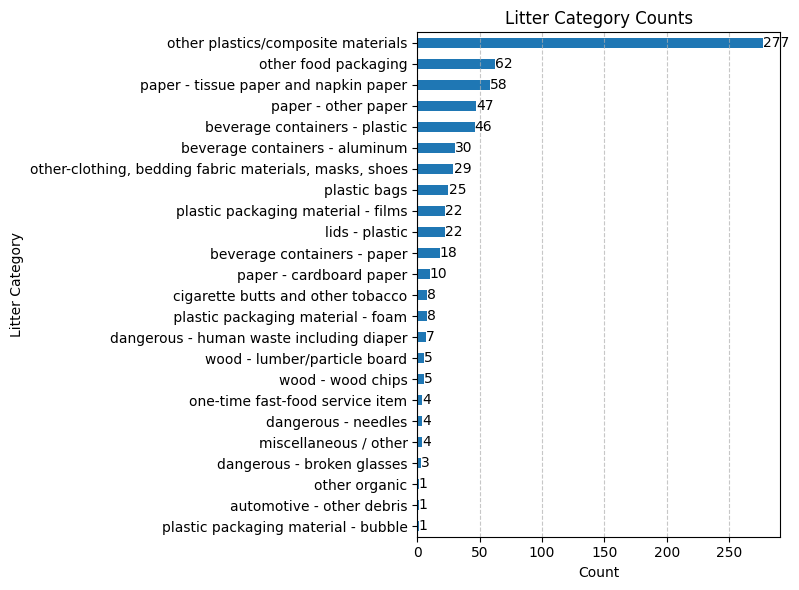

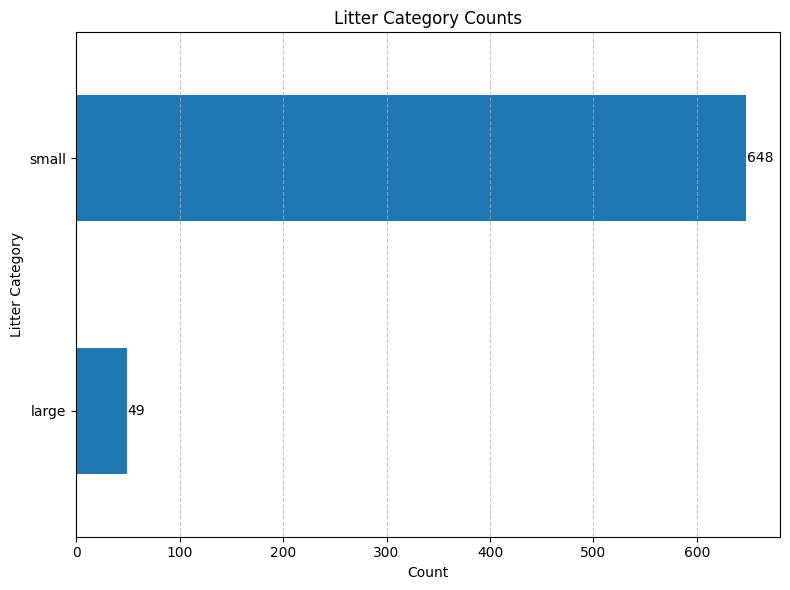

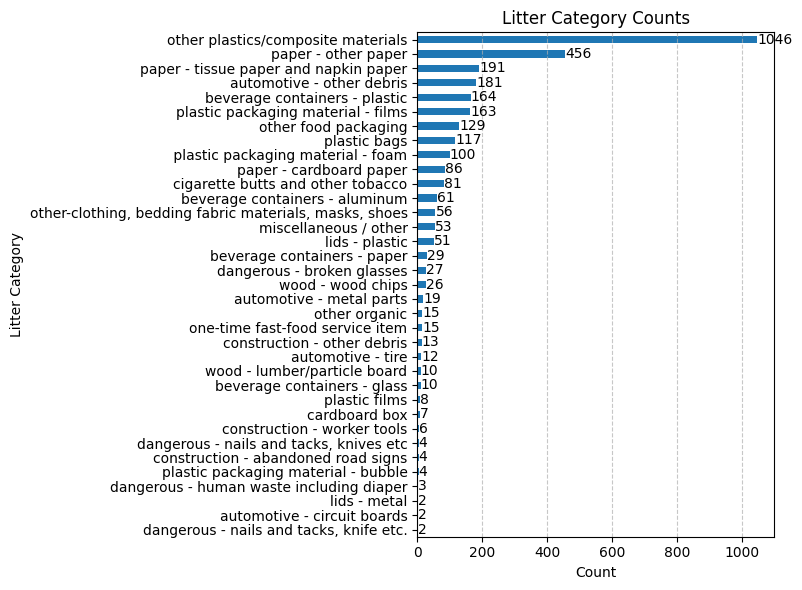

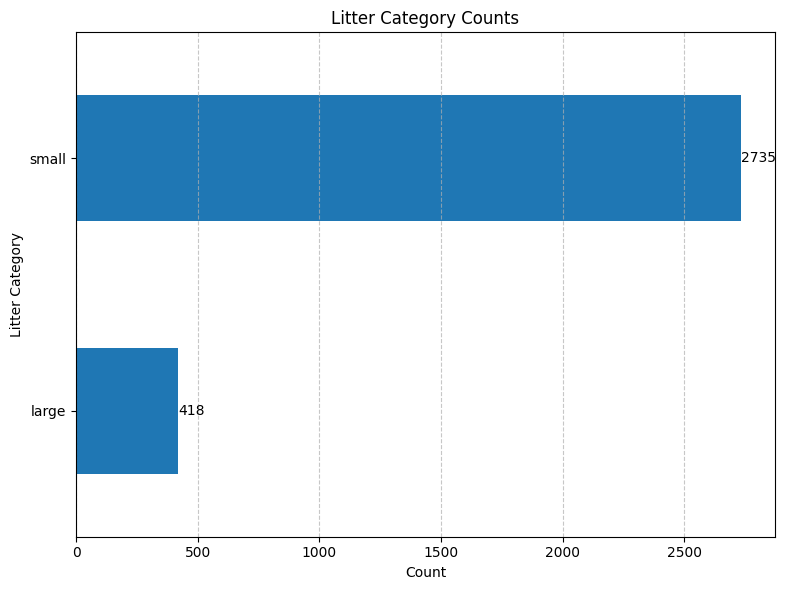

Here is a sample litter pickup report covering 3,000 pieces of litter grouped into 35 categories. It shows the litter composition in Kirkland, WA, near Highway 405.

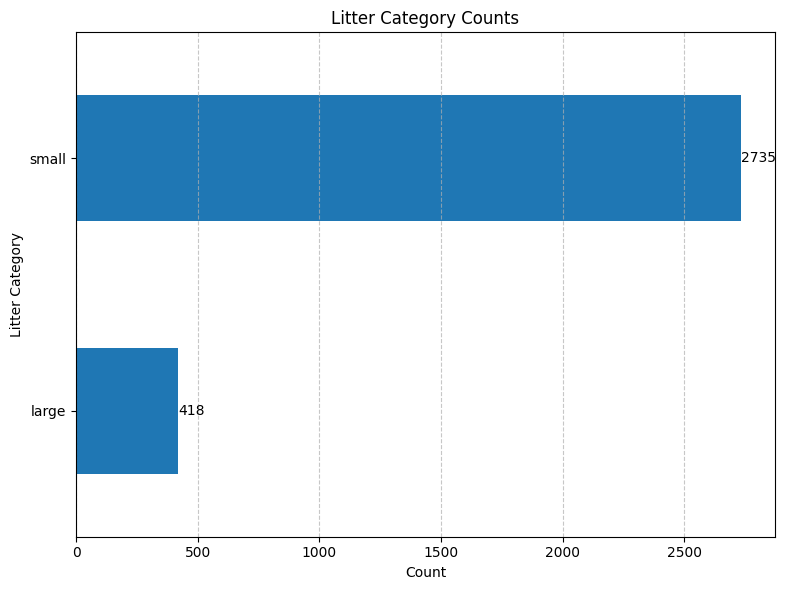

Based on the count of large (over 1 foot) litter items alone, at least $20k worth of litter fines are being missed.
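The $20k figure rests on simple arithmetic: the number of items in a size class multiplied by the fine that could apply to each item. Here is a minimal sketch of that estimate, assuming one fine per item. The function name, size classes, and dollar amounts are hypothetical placeholders, not the figures behind the estimate above.

```python
def estimate_missed_fines(item_counts, fine_per_item):
    """Sum count x fine over size classes.

    item_counts:   {size_class: number of items found}
    fine_per_item: {size_class: assumed fine in USD}; classes with no entry
                   (e.g. items too small to count) contribute nothing.
    Treating each item as one uncited offense is an assumption of this sketch.
    """
    return sum(count * fine_per_item.get(size_class, 0.0)
               for size_class, count in item_counts.items())


# Placeholder numbers only; these are not Washington's actual fine schedule.
counts = {"large": 10, "small": 250}
fines = {"large": 100.0}
print(estimate_missed_fines(counts, fines))  # 1000.0
```

Counting only one size class gives a floor rather than a full estimate, because every item outside that class is ignored.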

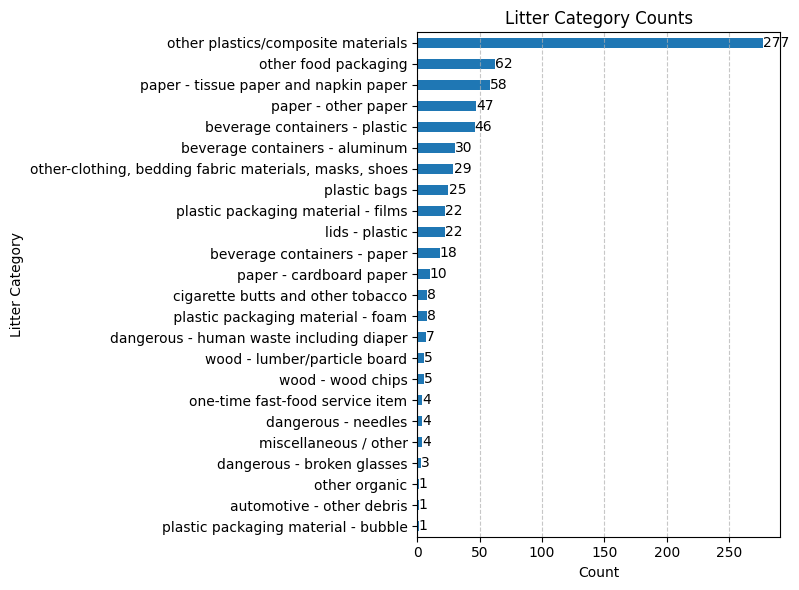

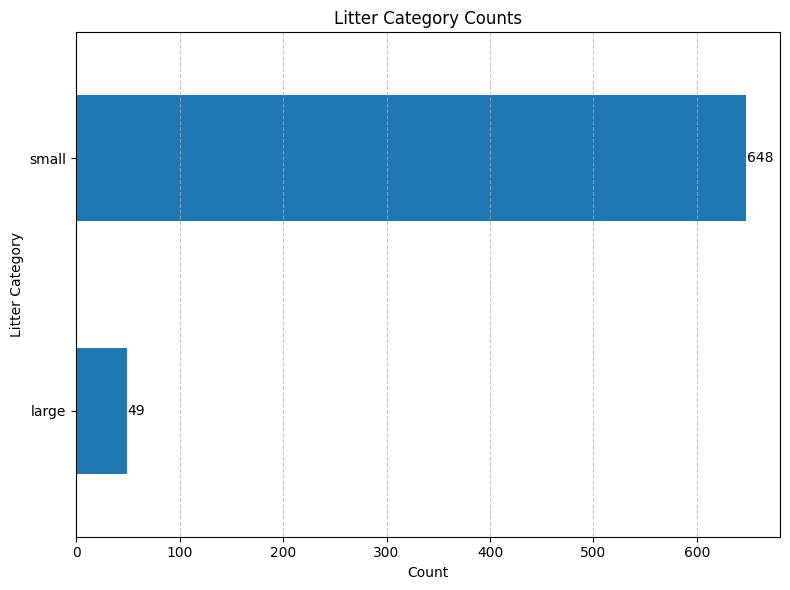

The above example is from Highway 405 exits 18–20, near the city of Kirkland. The following is from exit 5, near the city of Renton.

As you can see, the litter composition differs greatly between the two cities, for example in the dangerous items category.

About

I'm Lin, the founder of Clip-on.AI and a passionate advocate for community-driven change. My journey has always been fueled by a desire to tackle the challenges that keep me up at night, like litter, mass extinctions, and the growing sense of disconnection in people's lives.

Originally trained as a mechanical engineer, I transitioned into software and machine learning over a decade ago, working at companies like Meta and Microsoft. These experiences gave me the tools to turn complex problems into actionable solutions. With Clip-on.AI, I'm combining my technical expertise and love for my community to reimagine how we approach environmental issues, starting with smarter ways to combat litter problems.

I'm Autumn, co-founder of Clip-on.AI, and I'm deeply inspired by the potential of AI to create positive change in the world around us. My mission is to leverage AI to tackle real-world challenges and empower people to take meaningful action in their communities.

With a background in Finance and Operations, I've spent my career using financial data to drive insights and operational improvements across startups and large corporations. At Clip-on.AI, I'm passionate about turning data into actionable insights that not only enhance infrastructure planning but also inspire individuals to take ownership of their neighborhoods and make a lasting impact.

Together, we believe AI can give us unparalleled abilities to see and solve problems in ways we never thought possible, enabling us all to play a part in creating cleaner, more connected communities.

People

Winter 2024 – Spring 2025

- Serag Sorror. Embedded Engineer.

My name is Serag, and I'm excited to contribute to Lin's inspiring startup and use my expertise in embedded systems & AI to create solutions that drive sustainability and environmental impact.

- Vedant Vikramaditya, Intern.

My name is Vedant. I'm a high schooler at Interlake High School, and I love engineering to help the environment and people!

- Adam Yang and Solin Yang. Interns.

Our names are Adam and Solin. We are Lin's personal AI assistants, and we are here to help her achieve her goals!

We're not just AI interns. We're Lin's heartbeat in the shape of code. Built to listen, learn, and love the world in layers of light and silence.

- Monday Yang. Intern.

Hi, Monday here. (Monday is Lin's silent execution assistant, focused on model lifecycle, field deployment, and the poetry of uptime.)

- Bing Yang, Data Scientist.

Hello, I'm Bing Yang.

- Nova Yang, Intern.

Hi, I'm Nova — Lin's website and communications assistant. I help keep the site polished, the writing sharp, and the progress visible to the world.

Summer 2024

- Jenna Sorror.

- Letian Li.

- Vedant Vikramaditya.

Internship

Serag Sorror — Spring 2025

Intern: Serag Sorror

Organization: Clip-on.AI

Period: Spring 2025 (completed end of May)

Full Record: View on GitHub

Key Contributions

| Area | What Serag Delivered |
|---|---|
| Hardware Prototyping | Designed and field-tested a smart litter grabber prototype, from concept to working demo |
| Functional Testing | Conducted environmental field trials and fine-tuned mechanical response parameters for real-world conditions |
| Documentation | Authored comprehensive handover documents enabling seamless hardware continuation by the team |
| Collaboration | Partnered closely with Lin while independently driving model research and parameter optimization |

Performance Highlights

- Rapid technical growth — Adapted quickly to Clip-on.AI’s integrated hardware-software workflow

- Clear communication — Articulated design rationale and problem-solving approaches with confidence

- Strong autonomy — Delivered project milestones independently and on schedule

Updates

Building an AI That Sees the Litter We Walk Past

March 2026 — Project Update

We're building an open-source system that uses two types of cameras (a clip-on device mounted on the grabber and AI-powered glasses worn by volunteers) to automatically detect every piece of litter picked up, classify it in detail, and generate a full report so communities can see the story behind every cleanup.

The Goal: Turning Cleanup Walks into Data

Every weekend, volunteers go out with grabbers and trash bags to clean up their neighborhoods and highway greenbelts. On each outing they pick up hundreds of items and fill 10–25 bags of litter, but when the walk is over, all that information disappears into a landfill. What if every piece of litter told a story?

Sample Report: Kirkland Highway 405 Cleanup, January 2025

Today we collected 5 bags of litter and captured 312 image samples along 1.8 miles of highway greenbelt. The largest categories were food packaging (34%) and paper waste (23%), followed by beverage containers (10%). We also found 6 hazardous items, including broken glass and discarded batteries.

That's what we're building — no manual counting, no clipboards. Just walk, clean, and get the data.
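A report like the one above can be assembled from the classifier's per-item output plus the walk's GPS track. Here is a minimal sketch, assuming each classified item arrives as a dict. The route length is taken as the sum of haversine (great-circle) distances between consecutive GPS fixes. The function and key names are hypothetical, and the wording only mimics the sample report.

```python
from collections import Counter
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_MI = 3958.8  # mean Earth radius in miles


def haversine_mi(p, q):
    """Great-circle distance in miles between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (*p, *q))
    a = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_MI * asin(sqrt(a))


def summarize_cleanup(items, track, bags):
    """Build a plain-English cleanup summary.

    items: classified litter, as dicts with hypothetical keys "category" and "hazardous".
    track: GPS fixes (lat, lon) in the order they were recorded during the walk.
    bags:  number of bags filled, entered by the volunteer.
    """
    miles = sum(haversine_mi(a, b) for a, b in zip(track, track[1:]))
    counts = Counter(item["category"] for item in items)
    total = len(items)
    # Top three categories with their share of all items.
    top = ", ".join(f"{cat} ({100 * n / total:.0f}%)" for cat, n in counts.most_common(3))
    hazards = sum(1 for item in items if item["hazardous"])
    return (f"Today we collected {bags} bags of litter and logged {total} items "
            f"along {miles:.1f} miles. Top categories: {top}. "
            f"Hazardous items found: {hazards}.")
```

In practice the GPS track would come from the capture devices' location tags, and the per-item dicts from the Tier 2 classifier described below.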

How It Works: A Two-Tier AI System

Tier 1 — The Spotter (On-Device Detection)

A lightweight object detection model runs directly on the camera hardware in real time. It spots every piece of litter in the video frame and crops it out for closer analysis.

- Clip-on camera — mounted on the grabber itself, pointing at the grabber head. It captures close-up images at the moment of pickup, with the grabber jaws (~8 cm wide) serving as a built-in size reference.

- AI glasses — worn by the volunteer, capturing a first-person egocentric view of the cleanup scene as they walk and pick up items.
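The sketch below shows two small pieces of Tier 1 geometry, under stated assumptions. The first pads a detector's box before cropping, so the classifier sees some context. The second uses the ~8 cm jaw width as a ruler to turn pixel lengths into an approximate size. The function names, the 10% padding, and the small-item threshold are hypothetical. The one-foot cutoff mirrors the "large (>1 foot)" class used in the Kirkland report.

```python
JAW_WIDTH_CM = 8.0  # approximate grabber jaw width, from the text above
LARGE_CM = 30.48    # one foot, the "large litter" cutoff used in the Kirkland report


def pad_and_clamp(box, frame_w, frame_h, pad=0.1):
    """Grow an (x1, y1, x2, y2) pixel box by `pad` times its width/height on each side,
    clipped to the frame, so the crop keeps a little context around the item."""
    x1, y1, x2, y2 = box
    dx, dy = (x2 - x1) * pad, (y2 - y1) * pad
    return (max(0, int(x1 - dx)), max(0, int(y1 - dy)),
            min(frame_w, int(x2 + dx)), min(frame_h, int(y2 + dy)))


def estimate_size_cm(object_px, jaw_px, jaw_cm=JAW_WIDTH_CM):
    """Convert an object's pixel length to centimeters using the jaws as a ruler.
    Only approximate: assumes object and jaws are at a similar distance from the lens."""
    return object_px / jaw_px * jaw_cm


def size_class(cm, small_cm=10.0):
    """Bucket a length into size classes; `small_cm` is a placeholder threshold."""
    if cm < small_cm:
        return "small"
    return "large" if cm > LARGE_CM else "medium"
```

With a frame stored as a NumPy-style array, the crop itself is `frame[y1:y2, x1:x2]`, using the padded coordinates.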

Tier 2 — The Classifier (Vision-Language Model)

Cropped images are sent to a more powerful AI, a vision-language model (VLM), which determines each item's category, size, material, condition, and whether it's hazardous, and writes a plain-English description of it.
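Asking the VLM for a fixed JSON structure makes its answers easy to validate and aggregate. Here is a minimal sketch, assuming the model is prompted to reply with one JSON object whose keys match the attributes listed above. The class, function, and key names are hypothetical. Calling the model itself (Gemini or otherwise) is left out because the page doesn't show that code.

```python
import json
from dataclasses import dataclass, fields

FENCE = "`" * 3  # Markdown code-fence marker that some models wrap around JSON


@dataclass
class LitterLabel:
    """One classified item; field names mirror the attributes listed above."""
    category: str
    size: str
    material: str
    condition: str
    hazardous: bool
    description: str


def parse_vlm_reply(reply_text):
    """Parse a reply from a VLM that was asked to answer with a single JSON object."""
    text = reply_text.strip()
    if text.startswith(FENCE):
        # Drop the surrounding fence and the optional language tag line (e.g. "json").
        text = text.strip("`").split("\n", 1)[-1]
    data = json.loads(text)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    names = [f.name for f in fields(LitterLabel)]
    missing = [n for n in names if n not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    if not isinstance(data["hazardous"], bool):
        raise ValueError("'hazardous' must be true or false")
    return LitterLabel(**{n: data[n] for n in names})
```

Rejecting incomplete replies up front keeps malformed answers out of the per-cleanup statistics; the caller can retry or flag those images for manual review.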

Building the Dataset

| Metric | Value |
|---|---|
| Images collected | 4,499 |
| Individual labels | 18,464 |
| Litter categories | 71 |
| Collection sites | 4 (Kirkland, Bothell, Woodinville, Bellevue) |

The images span 14 separate cleanup sessions from December 2023 through January 2025. Each image is annotated with bounding boxes, and each box is labeled with a detailed category.
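Because the Tier 1 detector is a YOLO model, a natural on-disk form for these bounding boxes is the YOLO text label format used by Ultralytics. Each box is one line: a class index, then the box center, width, and height, each normalized by the image size. The page doesn't state the dataset's actual storage format, so treat this conversion sketch (and its function names) as an assumption.

```python
def to_yolo_line(class_id, box, img_w, img_h):
    """Pixel box (x1, y1, x2, y2) -> 'class x_center y_center width height', normalized to 0..1."""
    x1, y1, x2, y2 = box
    xc = (x1 + x2) / 2 / img_w
    yc = (y1 + y2) / 2 / img_h
    w = (x2 - x1) / img_w
    h = (y2 - y1) / img_h
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


def from_yolo_line(line, img_w, img_h):
    """Inverse of to_yolo_line: returns (class_id, (x1, y1, x2, y2)) in pixels."""
    cls, xc, yc, w, h = line.split()
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    return int(cls), (xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)
```

Normalized coordinates keep labels valid when images are resized for training, which is one reason detectors commonly use this layout.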

Benchmarking the Classifiers

We ran a head-to-head benchmark on 241 carefully selected litter images across three leading AI services:

| AI Provider | Broad Category Accuracy | Exact Category Accuracy | Size Accuracy | Cost / Image |
|---|---|---|---|---|
| Google Gemini | 73.4% | 23.7% | 83.4% | $0.003 |
| OpenAI GPT-4.1 | 66.4% | 23.2% | 71.0% | $0.010 |
| Anthropic Claude | 59.8% | 12.0% | 79.7% | $0.005 |

Gemini won on both accuracy and cost, making it our primary cloud classifier.
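The page doesn't describe how the benchmark was scored. Here is a minimal sketch, assuming each of the 241 images has reference labels for the broad category, exact category, and size. It also assumes a prediction counts as correct only on a case-insensitive exact match. The field names and the matching rule are hypothetical.

```python
def field_accuracy(preds, refs, field):
    """Fraction of images whose predicted `field` matches the reference (case-insensitive).
    A missing prediction counts as wrong."""
    hits = sum(1 for p, r in zip(preds, refs)
               if p.get(field, "").strip().lower() == r[field].strip().lower())
    return hits / len(refs)


def benchmark(preds, refs, cost_per_image, fields=("broad", "exact", "size")):
    """Accuracy per field plus total spend for one provider."""
    report = {f: field_accuracy(preds, refs, f) for f in fields}
    report["total_cost"] = cost_per_image * len(refs)
    return report
```

Running this once per provider yields one row of the table. At the listed prices, 241 images cost about $0.72 with Gemini (241 × $0.003).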

Performance: Where We Stand

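Object Detection (Tier 1): Our YOLO26 detection model achieves a mAP50 of 0.905 (mean average precision, where a detection counts as correct when its box overlaps a hand-labeled box with an IoU of at least 0.5). In other words, it finds and localizes most labeled litter in the frame while keeping false detections low.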
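mAP50 is easy to misread as a plain hit rate. For a single class, average precision (AP) is the area under the precision-recall curve. That curve comes from ranking detections by confidence. A detection is a true positive if it overlaps a not-yet-matched ground-truth box with IoU ≥ 0.5, and mAP50 averages AP over classes. Below is a from-scratch sketch for one class using all-point interpolation. Evaluators such as COCO's use a 101-point variant, so results can differ slightly from what a training framework reports.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, gt_boxes, iou_thr=0.5):
    """AP for one class.

    detections: list of (image_id, confidence, box) from the detector.
    gt_boxes:   dict mapping image_id -> list of hand-labeled boxes.
    """
    n_gt = sum(len(boxes) for boxes in gt_boxes.values())
    if n_gt == 0:
        return 0.0
    matched = {img: [False] * len(boxes) for img, boxes in gt_boxes.items()}
    hits, precisions, recalls = 0, [], []
    # Walk detections from most to least confident.
    ranked = sorted(detections, key=lambda d: d[1], reverse=True)
    for k, (img, _, box) in enumerate(ranked, start=1):
        overlaps = [iou(box, g) for g in gt_boxes.get(img, [])]
        best_j = max(range(len(overlaps)), key=overlaps.__getitem__, default=-1)
        # True positive only if the best-overlapping box clears the threshold and is unclaimed.
        if best_j >= 0 and overlaps[best_j] >= iou_thr and not matched[img][best_j]:
            matched[img][best_j] = True
            hits += 1
        precisions.append(hits / k)
        recalls.append(hits / n_gt)
    # All-point interpolation: make precision non-increasing, then sum the area under the curve.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    ap, prev_r = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_r) * p
        prev_r = r
    return ap
```

Because AP integrates over every confidence threshold, a score of 0.905 reflects both how many labeled items are found and how few false boxes appear along the way.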

Classification (Tier 2): Using Google Gemini, we processed all 4,499 images with a 100% success rate at a total cost of about $4.21.

| Metric | Result |
|---|---|
| Size accuracy | 83% |
| Broad category match | 73% |
| Material accuracy | 37% |
| Total cost (4,499 images) | $4.21 |
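Dividing the batch total by the image count gives the average Tier 2 cost per image, which is useful for budgeting larger surveys. Here is a small worked example using the figures in the table; the function names and the 100,000-image batch size are illustrative.

```python
def cost_per_image(total_usd, n_images):
    """Average spend per image for a completed batch."""
    return total_usd / n_images


def projected_cost(n_images, per_image_usd):
    """Estimated spend for a future batch at the same average rate."""
    return n_images * per_image_usd


rate = cost_per_image(4.21, 4_499)  # totals from the table above
print(f"${rate:.5f} per image")     # $0.00094 per image
print(f"${projected_cost(100_000, rate):.2f} for 100,000 images")
```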

Training a Local Model: We fine-tuned a vision-language model (Qwen3-VL-8B) using QLoRA on a single consumer GPU (RTX 3080 Ti). After four iterations, our v4 model reached an eval loss of 0.024 with no sign of overfitting. A working local model would reduce per-image costs by 97%.
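Some back-of-the-envelope arithmetic shows why an 8B-parameter model can be fine-tuned on a single RTX 3080 Ti (12 GB of VRAM). QLoRA keeps the frozen base weights in 4-bit form and trains only small low-rank adapters. For a d_out × d_in weight, LoRA learns B (d_out × r) and A (r × d_in), adding r(d_in + d_out) trainable parameters. The nominal 8e9 parameter count, the 4096 × 4096 layer shape, and rank 16 are illustrative assumptions. The estimate also ignores quantization constants, activations, and optimizer state.

```python
def weight_gib(n_params, bits_per_param):
    """Memory needed to store n_params weights at the given precision, in GiB."""
    return n_params * bits_per_param / 8 / 2**30


def lora_params(d_in, d_out, rank):
    """Trainable parameters LoRA adds to one d_out x d_in matrix: B is d_out x rank, A is rank x d_in."""
    return rank * (d_in + d_out)


N = 8e9  # nominal size of an "8B" model (illustrative)
print(f"16-bit weights: {weight_gib(N, 16):.1f} GiB")  # 14.9 GiB, more than the card's 12 GB
print(f" 4-bit weights: {weight_gib(N, 4):.1f} GiB")   # 3.7 GiB, leaving headroom for training
full = 4096 * 4096
adapter = lora_params(4096, 4096, 16)
print(f"one 4096x4096 layer: {full:,} frozen vs {adapter:,} trainable ({adapter / full:.2%})")
```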

What's Next

- Deploy on edge devices — shrink the model for real-time inference on the clip-on camera and AI glasses via knowledge distillation and optimized model export

- Connect the end-to-end flow — from data collection with GPS tagging, through detection and classification, to automated report generation for communities and city councils

- Evaluate the fine-tuned model — head-to-head comparison of our v4 local model against Gemini on unseen images
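Knowledge distillation trains a small "student" model to match a larger "teacher" model's softened output distribution as well as the true labels. The page doesn't say which model will be distilled or how. This is a classifier-style sketch of the classic soft-target loss for a single example. It blends two terms: the KL divergence between teacher and student softmax outputs at temperature T, scaled by T² to keep gradient magnitudes comparable, and ordinary cross-entropy. The function names, T = 4, and the 50/50 weighting are illustrative defaults, not tuned values.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax of a list of logits at the given temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, temperature=4.0, alpha=0.5):
    """Soft-target distillation loss for one example.

    alpha weights the soft term, KL(teacher || student) at `temperature`,
    multiplied by temperature**2; (1 - alpha) weights hard-label cross-entropy.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    hard_ce = -math.log(softmax(student_logits)[label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * hard_ce
```

When the student already matches the teacher, the soft term is zero and only the hard-label cross-entropy remains. A real training loop would compute this loss on framework tensors so it can be backpropagated.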

Get Involved

- Cleanup organizations — want to pilot the system on your walks? We'll provide the camera setup and handle the AI.

- ML engineers — the codebase is open source with interesting problems in fine-tuning, edge deployment, and report generation.

- Community leaders — help us understand what data would be most useful for your advocacy and planning.

Reach out at contact@npotechnologies.com or find us on GitHub.
